How to Achieve Continuous Delivery Using Cloud Pipelines

In today’s fast-paced software development landscape, Continuous Delivery (CD) has become a cornerstone for organizations that want to release software updates frequently and reliably. Cloud pipelines, built on cloud computing services, offer a scalable way to implement CD. They provide the infrastructure, tools, and automation needed to streamline software delivery, enabling faster release cycles, improved quality, and reduced risk. This article explains how to achieve Continuous Delivery using cloud pipelines, covering the key concepts, best practices, and essential tools.

The transition to Continuous Delivery is not merely a technological shift; it’s a cultural transformation that requires a commitment to automation, collaboration, and continuous improvement. Cloud pipelines facilitate this transformation by providing a centralized platform for managing the entire software delivery lifecycle, from code commit to production deployment. By automating repetitive tasks, such as building, testing, and deploying software, cloud pipelines free up developers to focus on innovation and problem-solving.

This article will guide you through the essential steps involved in setting up a Continuous Delivery pipeline in the cloud. We’ll cover everything from selecting the right cloud provider and tools to designing an effective pipeline architecture and implementing robust testing strategies. Whether you’re just starting your CD journey or looking to optimize your existing pipeline, this guide will provide you with the knowledge and insights you need to succeed. We will also address some common challenges and how to overcome them, ensuring a smooth and successful implementation of Continuous Delivery using cloud pipelines.

Understanding Continuous Delivery and Cloud Pipelines

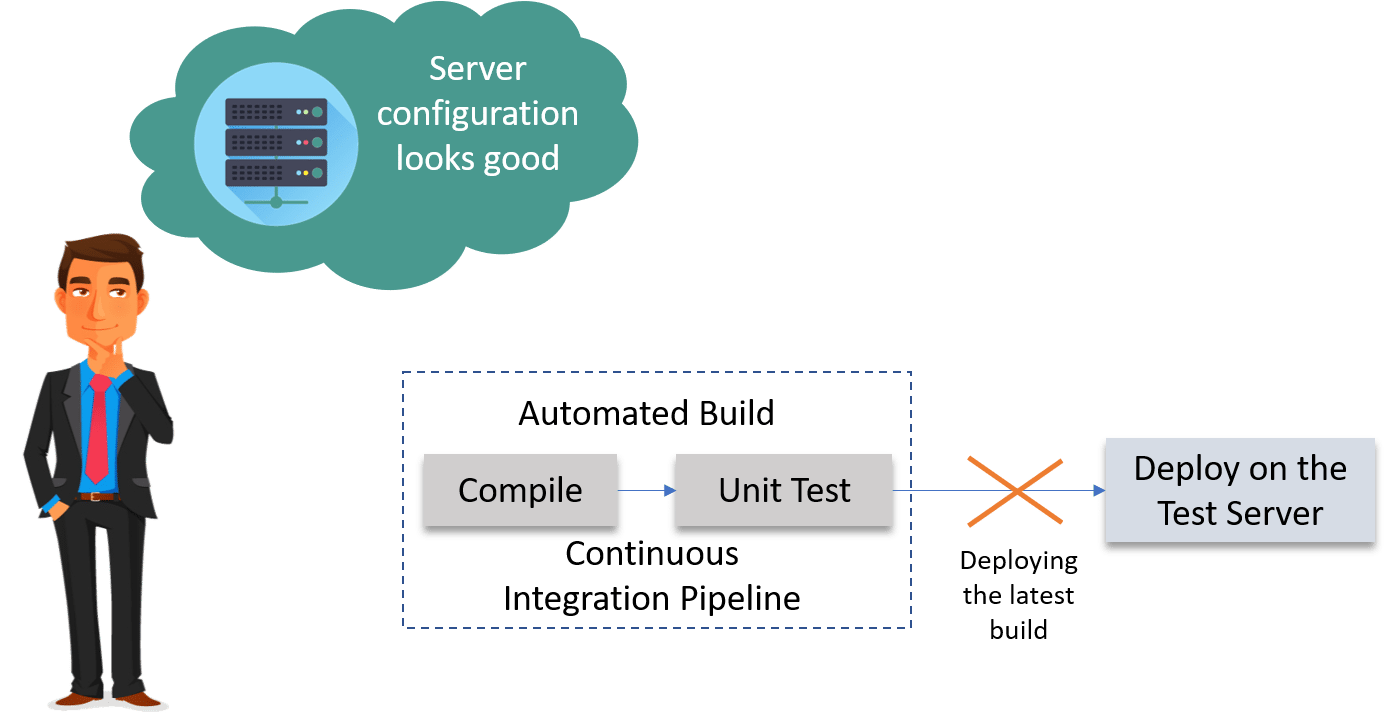

Continuous Delivery (CD) is a software development practice that focuses on automating the release process, allowing for frequent and reliable delivery of software updates to production. It builds upon Continuous Integration (CI) by automating the steps required to release software to production, including testing, configuration, and deployment. The goal of CD is to ensure that software is always in a deployable state, making it possible to release new features and bug fixes quickly and easily.

What is a Cloud Pipeline?

A cloud pipeline is a set of automated processes that orchestrate the building, testing, and deployment of software applications in a cloud environment. It leverages cloud services, such as compute, storage, and networking, to provide a scalable and flexible infrastructure for managing the entire software delivery lifecycle. Cloud pipelines typically include stages for code integration, automated testing, artifact creation, and deployment to various environments, such as staging and production.

Benefits of Using Cloud Pipelines for Continuous Delivery

Cloud pipelines offer several significant advantages for implementing Continuous Delivery:

- Increased Speed and Agility: Automating the release process reduces manual effort and accelerates the delivery of software updates.

- Improved Quality: Automated tests and quality checks run on every change, catching many defects before the software reaches production.

- Reduced Risk: Automated deployments and rollbacks minimize the risk of errors and downtime.

- Scalability and Elasticity: Cloud infrastructure provides the ability to scale resources up or down as needed, ensuring that the pipeline can handle fluctuating workloads.

- Cost Savings: Automation reduces manual effort, and managed cloud services reduce the need to run and maintain dedicated build infrastructure, which can lower costs.

- Enhanced Collaboration: Cloud pipelines provide a centralized platform for managing the entire software delivery lifecycle, fostering collaboration between developers, testers, and operations teams.

Designing Your Cloud Pipeline Architecture

Designing an effective cloud pipeline architecture is crucial for achieving Continuous Delivery. The architecture should be tailored to the specific needs of your organization and the characteristics of your software applications. Here are some key considerations:

Choosing the Right Cloud Provider

The choice of cloud provider is a critical decision that will impact the overall performance, cost, and scalability of your cloud pipeline. Popular cloud providers include:

- Amazon Web Services (AWS): Offers a comprehensive suite of cloud services, including AWS CodePipeline, AWS CodeBuild, and AWS CodeDeploy.

- Microsoft Azure: Provides a robust platform for building and deploying applications, including Azure DevOps and its Azure Pipelines service.

- Google Cloud Platform (GCP): Offers a range of cloud services for software development and deployment, including Cloud Build and Cloud Deploy.

When choosing a cloud provider, consider factors such as pricing, performance, security, compliance, and the availability of relevant services and tools.

Defining Pipeline Stages

A cloud pipeline typically consists of several stages, each responsible for a specific task in the software delivery process. Common pipeline stages include:

- Source Code Management: This stage involves storing and managing the source code of your application. Tools like Git and GitHub are commonly used.

- Build: This stage compiles the source code into executable artifacts. Tools like Maven, Gradle, and npm are used for building.

- Test: This stage runs automated tests to verify the quality of the software. Various testing frameworks like JUnit, Selenium, and Jest are used.

- Package: This stage packages the built artifacts into a deployable format, such as a Docker image or a zip file.

- Deploy: This stage deploys the packaged artifacts to various environments, such as staging and production. Tools like Kubernetes and Docker Compose are used for deployment.

- Release: This stage makes the deployed software available to users. This often involves updating DNS records, configuring load balancers, and monitoring the application’s performance.
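The stages above run in sequence, and a failure in any stage should stop the pipeline before later stages run. The following minimal Python sketch models that behavior. The `Stage` class and the stage bodies are hypothetical placeholders for calls to real tools such as Git, a build tool, a test runner, and a deployment tool.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Stage:
    name: str
    run: Callable[[], bool]  # returns True on success, False on failure


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first failure.

    Returns the names of the stages that completed successfully.
    """
    completed: List[str] = []
    for stage in stages:
        print(f"==> {stage.name}")
        if not stage.run():
            print(f"Stage '{stage.name}' failed; later stages are skipped.")
            break
        completed.append(stage.name)
    return completed


if __name__ == "__main__":
    # Hypothetical stage bodies; a real pipeline would invoke actual tools here.
    pipeline = [
        Stage("source", lambda: True),
        Stage("build", lambda: True),
        Stage("test", lambda: False),  # simulate a failing test suite
        Stage("package", lambda: True),
        Stage("deploy", lambda: True),
        Stage("release", lambda: True),
    ]
    print(run_pipeline(pipeline))  # ['source', 'build']
```

Stopping early is what keeps an untested or broken build from ever reaching the package, deploy, and release stages.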

Implementing Infrastructure as Code (IaC)

Infrastructure as Code (IaC) is the practice of managing and provisioning infrastructure through code, rather than manual processes. IaC allows you to define your infrastructure in a declarative manner, making it easy to automate the creation, configuration, and management of cloud resources. Tools like Terraform, AWS CloudFormation, and Azure Resource Manager are commonly used for IaC.
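Declarative IaC tools compare the desired state described in code with the actual state of your cloud resources and compute the changes needed to reconcile them. The sketch below illustrates that idea in simplified form, in the spirit of a Terraform plan. The resource names and attributes are hypothetical, and this is not how any particular tool is implemented.

```python
from typing import Dict, List, Tuple

# Resource name -> attributes (a simplified, hypothetical representation).
Resources = Dict[str, Dict[str, str]]


def plan(desired: Resources, actual: Resources) -> List[Tuple[str, str]]:
    """Compare desired and actual state; return (action, resource) pairs."""
    actions: List[Tuple[str, str]] = []
    for name in sorted(desired):
        if name not in actual:
            actions.append(("create", name))
        elif desired[name] != actual[name]:
            actions.append(("update", name))
    for name in sorted(actual):
        if name not in desired:
            actions.append(("delete", name))  # exists but no longer declared
    return actions


if __name__ == "__main__":
    desired = {"web_server": {"size": "medium"}, "bucket": {"versioning": "on"}}
    actual = {"web_server": {"size": "small"}, "old_queue": {}}
    for action, name in plan(desired, actual):
        print(action, name)
    # create bucket
    # update web_server
    # delete old_queue
```

Because the plan is derived from code, running it again after the changes are applied yields an empty list, which is what makes declarative infrastructure repeatable.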

Implementing Automation in Your Cloud Pipeline

Automation is the key to achieving Continuous Delivery. By automating repetitive tasks, you can reduce manual effort, improve efficiency, and minimize the risk of errors. Here are some key areas to focus on when implementing automation in your cloud pipeline:

Automated Build Processes

Automating the build process ensures that software is built consistently and reliably. This involves configuring build tools to automatically compile source code, run tests, and package artifacts whenever changes are committed to the source code repository. Tools like Jenkins, GitLab CI, and CircleCI can be used to automate the build process.
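A build triggered by a commit should produce an artifact that can be traced back to exactly that commit. The sketch below uses a hypothetical `build_tag` helper and a simple rule that only the `main` branch is built, and derives an immutable artifact tag from the branch name and commit SHA. It assumes classic 40-character SHA-1 commit IDs; CI tools such as Jenkins, GitLab CI, and CircleCI expose the commit SHA to builds through their own variables.

```python
import re
from typing import Optional, Tuple

# Classic Git commit IDs are 40-character SHA-1 hex strings.
FULL_SHA = re.compile(r"^[0-9a-f]{40}$")


def build_tag(branch: str, commit_sha: str,
              tracked_branches: Tuple[str, ...] = ("main",)) -> Optional[str]:
    """Return an immutable artifact tag for a commit, or None if no build should run."""
    if branch not in tracked_branches:
        return None  # e.g. feature branches that this hypothetical pipeline ignores
    if not FULL_SHA.match(commit_sha):
        raise ValueError(f"expected a full 40-character commit SHA, got {commit_sha!r}")
    # The same commit always maps to the same tag, so artifacts stay traceable.
    return f"{branch}-{commit_sha[:12]}"
```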

Automated Testing

Automated testing is crucial for ensuring the quality of your software. This involves writing automated tests that cover various aspects of the application, such as unit tests, integration tests, and end-to-end tests. These tests should be run automatically as part of the pipeline, providing feedback on the quality of the software early in the development process. Tools like Selenium, JUnit, and Jest are used for automated testing.
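As a concrete illustration, here is a minimal unit test written with Python's built-in `unittest` module, plus a small runner that a pipeline stage could call. The `apply_discount` function is hypothetical application code. The pattern is to run the fastest tier first and exit with a non-zero status on failure so the pipeline stops early.

```python
import unittest
from typing import Type


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical application code under test."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountUnitTests(unittest.TestCase):
    def test_applies_percentage(self) -> None:
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_rejects_invalid_percent(self) -> None:
        with self.assertRaises(ValueError):
            apply_discount(100.0, 150)


def run_tier(test_case: Type[unittest.TestCase]) -> bool:
    """Run one tier of tests and report whether every test passed."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(test_case)
    result = unittest.TextTestRunner(verbosity=1).run(suite)
    return result.wasSuccessful()


if __name__ == "__main__":
    # Fast unit tests run first; slower integration and end-to-end tiers
    # would be appended here and run only if earlier tiers pass.
    for tier in [ApplyDiscountUnitTests]:
        if not run_tier(tier):
            raise SystemExit(1)  # non-zero exit marks the pipeline stage as failed
```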

Automated Deployment

Automated deployment ensures that software is deployed to various environments consistently and reliably. This involves configuring deployment tools to automatically deploy packaged artifacts to staging and production environments whenever a new version passes the earlier stages of the pipeline. Tools like Kubernetes, Docker Compose, and AWS CodeDeploy can be used for automated deployment.
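One common shape for automated deployment is to promote a version through environments in order and roll back automatically if a health check fails. The sketch below models that flow. The environment dictionaries and the `health_check` callable are hypothetical stand-ins for real deployment tooling such as Kubernetes or AWS CodeDeploy.

```python
from typing import Callable, Dict

Environment = Dict[str, str]  # stand-in for real state, e.g. {"current": "1.0"}


def deploy_with_rollback(env: Environment, new_version: str,
                         health_check: Callable[[str], bool]) -> bool:
    """Switch env to new_version; restore the previous version if it is unhealthy."""
    previous = env["current"]
    env["current"] = new_version  # hypothetical deploy step
    if health_check(new_version):
        return True
    env["current"] = previous  # automated rollback
    return False


def promote(version: str, environments: Dict[str, Environment],
            health_check: Callable[[str], bool]) -> bool:
    """Deploy to each environment in order (e.g. staging, then production).

    Stops at the first environment that fails, so a bad build never reaches
    the environments that come after it.
    """
    for name, env in environments.items():  # dicts keep insertion order
        if not deploy_with_rollback(env, version, health_check):
            print(f"{name}: {version} failed health check, rolled back")
            return False
        print(f"{name}: {version} deployed")
    return True
```

Ordering staging before production in the dictionary is the design choice that matters here: production only ever sees versions that already passed in staging.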

Configuration Management

Configuration management involves managing the configuration of your infrastructure and applications in a consistent and automated manner. This ensures that your environments are configured correctly and that changes are applied consistently across all environments. Tools like Ansible, Chef, and Puppet are used for configuration management.
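A common configuration pattern is a shared base configuration with small, version-controlled overrides per environment. The sketch below uses hypothetical configuration keys and shows a recursive merge in which environment-specific values win. It illustrates the idea only and does not reflect how Ansible, Chef, or Puppet represent configuration.

```python
from copy import deepcopy
from typing import Any, Dict


def merge_config(base: Dict[str, Any], override: Dict[str, Any]) -> Dict[str, Any]:
    """Recursively merge override into a copy of base; override wins on conflicts."""
    merged = deepcopy(base)  # never mutate the shared base configuration
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_config(merged[key], value)
        else:
            merged[key] = deepcopy(value)
    return merged


# Hypothetical settings; in practice these would live in version-controlled files.
BASE = {"app": {"log_level": "INFO", "workers": 2}, "feature_flags": {"new_ui": False}}
PRODUCTION = {"app": {"workers": 8}}

if __name__ == "__main__":
    print(merge_config(BASE, PRODUCTION))
    # {'app': {'log_level': 'INFO', 'workers': 8}, 'feature_flags': {'new_ui': False}}
```

Keeping overrides small makes the differences between environments explicit and easy to review.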

Monitoring and Logging

Effective monitoring and logging are essential for identifying and resolving issues in your cloud pipeline. By monitoring the performance of your pipeline and applications, you can detect problems early and take corrective action before they impact users. Here are some key aspects of monitoring and logging:

Real-time Monitoring

Real-time monitoring provides insights into the performance of your pipeline and applications in real-time. This allows you to quickly identify and address issues as they arise. Tools like Prometheus, Grafana, and Datadog can be used for real-time monitoring.
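Many monitoring signals are computed over a sliding time window. The sketch below tracks the error rate of recent requests over a configurable window. Tools like Prometheus and Datadog compute such metrics for you, so the `ErrorRateMonitor` class is purely illustrative. Timestamps are passed in explicitly (for example from `time.monotonic()`) and are assumed to arrive in increasing order.

```python
from collections import deque
from typing import Deque, Tuple


class ErrorRateMonitor:
    """Track the error rate of requests seen in the last `window_seconds`."""

    def __init__(self, window_seconds: float = 60.0) -> None:
        self.window_seconds = window_seconds
        self.events: Deque[Tuple[float, bool]] = deque()  # (timestamp, is_error)

    def record(self, timestamp: float, is_error: bool) -> None:
        self.events.append((timestamp, is_error))

    def error_rate(self, now: float) -> float:
        # Drop events that have fallen out of the window (oldest first).
        while self.events and self.events[0][0] <= now - self.window_seconds:
            self.events.popleft()
        if not self.events:
            return 0.0
        errors = sum(1 for _, is_error in self.events if is_error)
        return errors / len(self.events)
```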

Centralized Logging

Centralized logging involves collecting and aggregating logs from all components of your pipeline and applications into a central location. This makes it easier to search and analyze logs for troubleshooting and debugging. Tools like the ELK stack (Elasticsearch, Logstash, and Kibana) and Splunk can be used for centralized logging.
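Centralized logs are much easier to search when each line is structured. The sketch below uses Python's standard `logging` module to emit one JSON object per line, a format that log shippers and platforms like the ELK stack can parse. The field names and the `context` convention are assumptions, not a required schema.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "timestamp": self.formatTime(record, "%Y-%m-%dT%H:%M:%S"),  # local time
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Optional context passed via logger.info(..., extra={"context": {...}}).
        context = getattr(record, "context", None)
        if context:
            entry.update(context)
        return json.dumps(entry)


def get_logger(name: str) -> logging.Logger:
    handler = logging.StreamHandler(sys.stdout)
    handler.setFormatter(JsonFormatter())
    logger = logging.getLogger(name)
    logger.handlers = [handler]  # replace any existing handlers on this logger
    logger.propagate = False     # avoid duplicate lines via the root logger
    logger.setLevel(logging.INFO)
    return logger


if __name__ == "__main__":
    log = get_logger("pipeline")
    log.info("deployment finished", extra={"context": {"stage": "deploy", "version": "1.4.2"}})
```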

Alerting and Notifications

Alerting and notifications are used to automatically notify you when issues are detected in your pipeline or applications. This allows you to respond quickly to problems and minimize downtime. Tools like PagerDuty and Opsgenie can be used for alerting and notifications.
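To avoid paging someone for a single noisy reading, alert rules typically fire only when a condition persists, similar in spirit to the `for` clause in Prometheus alerting rules. The sketch below, with a hypothetical `AlertRule` class and threshold, fires after a metric stays above a threshold for several consecutive evaluations. Delivering the notification to a tool such as PagerDuty or Opsgenie is out of scope here.

```python
from dataclasses import dataclass, field


@dataclass
class AlertRule:
    """Fire only when a metric stays above `threshold` for several checks in a row."""

    threshold: float
    for_evaluations: int = 3
    _consecutive: int = field(default=0, init=False, repr=False)

    def evaluate(self, value: float) -> bool:
        """Record one evaluation and return True if the alert should fire."""
        if value > self.threshold:
            self._consecutive += 1
        else:
            self._consecutive = 0  # any healthy reading resets the pending state
        return self._consecutive >= self.for_evaluations


if __name__ == "__main__":
    rule = AlertRule(threshold=0.05, for_evaluations=3)
    for error_rate in [0.10, 0.10, 0.01, 0.10, 0.10, 0.10]:
        if rule.evaluate(error_rate):
            # A real setup would hand off to a paging tool here.
            print(f"ALERT: error rate {error_rate:.0%} above threshold")
```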

Security Considerations

Security is a critical aspect of Continuous Delivery and cloud pipelines. It’s essential to implement security measures at every stage of the pipeline to protect your software and data from unauthorized access and threats. Here are some key security considerations:

Secure Code Management

Secure code management involves implementing security measures to protect your source code from unauthorized access and modification. This includes using strong authentication, implementing access controls, and regularly scanning your code for vulnerabilities. Tools like SonarQube and Snyk can be used for code scanning.
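Dedicated scanners such as SonarQube and Snyk cover far more ground, but a simple check for committed secrets illustrates the idea of scanning code automatically. The patterns below are illustrative and incomplete (the `AKIA` prefix of AWS access key IDs is a well-known example). Treat this as a sketch, not a substitute for a real scanner.

```python
import re
from typing import List, Tuple

# Illustrative patterns only; dedicated scanners ship far larger, tuned rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
}


def scan_text(text: str) -> List[Tuple[int, str]]:
    """Return (line_number, rule_name) for every line that matches a secret pattern."""
    findings: List[Tuple[int, str]] = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for rule_name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, rule_name))
    return findings


if __name__ == "__main__":
    # A fake, made-up key used only to demonstrate a match.
    sample = 'region = "us-east-1"\naws_key = "AKIAABCDEFGHIJKLMNOP"\n'
    for line_number, rule in scan_text(sample):
        print(f"line {line_number}: possible secret ({rule})")
```

Run as a pipeline step or pre-commit hook, a non-empty result would fail the check before the secret spreads further.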

Secure Build Process

Securing the build process involves ensuring that builds run in a trusted environment and that only authorized users can build and deploy software. This includes using secure build tools, implementing access controls, and regularly scanning your build environment for vulnerabilities.

Secure Deployment

Securing deployment involves ensuring that only authorized users and automated pipeline accounts can deploy software to production. This includes using secure deployment tools, implementing access controls, and regularly scanning your deployment environment for vulnerabilities. It is also important to follow the principle of least privilege when granting access to resources.
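The principle of least privilege can be expressed as deny-by-default: an identity may perform only the actions it has been explicitly granted. The sketch below uses hypothetical role and action names and a plain dictionary. In practice this policy lives in your cloud provider's IAM or in Kubernetes RBAC, not in application code.

```python
from typing import Dict, FrozenSet

# Hypothetical role-to-permission mapping for illustration only.
ROLE_PERMISSIONS: Dict[str, FrozenSet[str]] = {
    "ci-builder": frozenset({"artifacts:write"}),
    "staging-deployer": frozenset({"artifacts:read", "deploy:staging"}),
    "release-manager": frozenset({"artifacts:read", "deploy:staging", "deploy:production"}),
}


def is_allowed(role: str, action: str) -> bool:
    """Deny by default: only actions explicitly granted to the role are allowed."""
    return action in ROLE_PERMISSIONS.get(role, frozenset())


if __name__ == "__main__":
    print(is_allowed("staging-deployer", "deploy:production"))  # False
    print(is_allowed("release-manager", "deploy:production"))   # True
```

Note that the build role cannot deploy at all; splitting build and deploy permissions limits what a compromised build step could do.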

Common Challenges and Solutions

Implementing Continuous Delivery using cloud pipelines can present several challenges. Here are some common challenges and potential solutions:

Resistance to Change

Challenge: Teams may resist adopting new processes and tools. Solution: Provide training and support to help teams understand the benefits of Continuous Delivery and cloud pipelines. Emphasize the improvements in efficiency, quality, and speed.

Complexity

Challenge: Setting up and managing a cloud pipeline can be complex. Solution: Start small and gradually add complexity as needed. Use pre-built templates and modules to simplify the process. Consider using a managed cloud pipeline service to reduce the operational overhead.

Integration Issues

Challenge: Integrating different tools and services can be challenging. Solution: Choose tools and services that are compatible with each other. Use APIs and webhooks to integrate different components of the pipeline. Consider using a platform that offers built-in integrations.
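When a source repository notifies the pipeline through a webhook, the receiver should verify that the request really came from the repository. GitHub, for example, signs webhook payloads with HMAC-SHA256 using a shared secret and sends the result in the `X-Hub-Signature-256` header as `sha256=<hex digest>`. The sketch below checks such a signature. How you obtain the raw request body and header depends on your web framework, and the secret must be the one configured for the webhook.

```python
import hashlib
import hmac


def verify_signature(secret: bytes, body: bytes, signature_header: str) -> bool:
    """Check an HMAC-SHA256 webhook signature of the form 'sha256=<hex digest>'."""
    if not signature_header.startswith("sha256="):
        return False
    expected = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, signature_header)
```

The signature must be computed over the raw bytes exactly as received; re-serializing parsed JSON can change the bytes and make valid requests fail verification.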

Testing Challenges

Challenge: Writing and maintaining automated tests can be time-consuming. Solution: Invest in test automation frameworks and tools. Prioritize writing tests for critical functionality. Encourage developers to write tests as part of the development process.

Conclusion

Achieving Continuous Delivery using cloud pipelines is a journey that requires careful planning, execution, and continuous improvement. By understanding the key concepts, best practices, and essential tools, you can build a robust and scalable pipeline that enables you to deliver software updates frequently and reliably. Remember to focus on automation, collaboration, and security, and to continuously monitor and optimize your pipeline to ensure that it meets the evolving needs of your organization.

Continuous Delivery offers substantial benefits, including faster release cycles, improved quality, reduced risk, and increased agility. By embracing cloud pipelines, you can unlock these benefits and transform your software development process.

As you embark on your Continuous Delivery journey, remember that it’s not just about the technology; it’s also about the culture. Foster a culture of collaboration, communication, and continuous improvement to ensure that your team is aligned and motivated to achieve success. With the right tools, processes, and mindset, you can achieve Continuous Delivery and deliver exceptional value to your customers.

Frequently Asked Questions (FAQ) about How to Achieve Continuous Delivery Using Cloud Pipelines

What are the key steps involved in setting up a robust continuous delivery pipeline using cloud-based services like AWS, Azure, or Google Cloud?

Setting up a continuous delivery pipeline in the cloud involves several key steps. First, establish a version control system (e.g., Git) and a build automation tool (e.g., Jenkins, GitLab CI/CD, AWS CodePipeline, Azure DevOps Pipelines, or Google Cloud Build). Next, create automated tests (unit, integration, and end-to-end) to ensure code quality. Implement a staging environment that mirrors production for thorough testing. Automate the deployment process using infrastructure-as-code tools (e.g., Terraform, CloudFormation) to provision resources consistently. Finally, establish monitoring and alerting to detect and resolve issues quickly in production. Cloud providers offer managed services that simplify these steps, providing pre-built integrations and scalability. Remember to prioritize security throughout the pipeline.

How can I effectively automate database schema changes and data migrations as part of my continuous delivery pipeline when deploying applications to the cloud?

Automating database schema changes and data migrations is crucial for a successful continuous delivery process. Use database migration tools like Flyway, Liquibase, or Alembic to manage schema changes in a version-controlled manner. Integrate these tools into your cloud pipeline. Create migration scripts that can be applied automatically during deployment. Use a database-as-code approach to define your database schema in code, allowing you to version and manage it along with your application code. Implement rollback strategies to revert changes in case of failures. Test your database migrations thoroughly in a non-production environment before applying them to production. Consider using blue-green deployments or canary releases for database changes to minimize downtime and risk.
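Migration tools such as Flyway, Liquibase, and Alembic share a core idea: ordered, versioned migration scripts plus a table in the database that records which versions have been applied. The sketch below shows that idea using Python's built-in `sqlite3` module. The `MIGRATIONS` list and `schema_version` table are hypothetical and do not reflect any of those tools' actual formats. Each migration runs in its own explicit transaction, so a failing script is rolled back instead of leaving the schema half-changed.

```python
import sqlite3
from typing import List, Tuple

# Ordered, version-controlled migrations: (version, SQL). Never edit an applied
# migration; add a new version instead.
MIGRATIONS: List[Tuple[int, str]] = [
    (1, "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT NOT NULL)"),
    (2, "ALTER TABLE customers ADD COLUMN email TEXT"),
]


def migrate(conn: sqlite3.Connection) -> int:
    """Apply pending migrations in order and return the resulting schema version.

    Expects a connection opened with isolation_level=None so that the explicit
    BEGIN/COMMIT/ROLLBACK below control each transaction.
    """
    conn.execute("CREATE TABLE IF NOT EXISTS schema_version (version INTEGER NOT NULL)")
    current = conn.execute("SELECT COALESCE(MAX(version), 0) FROM schema_version").fetchone()[0]
    for version, sql in MIGRATIONS:
        if version <= current:
            continue  # already applied
        conn.execute("BEGIN")
        try:
            conn.execute(sql)
            conn.execute("INSERT INTO schema_version (version) VALUES (?)", (version,))
            conn.execute("COMMIT")
        except Exception:
            conn.execute("ROLLBACK")  # SQLite DDL is transactional, so this undoes it
            raise
        current = version
    return current


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:", isolation_level=None)
    print(migrate(conn))  # 2
    print(migrate(conn))  # 2 (nothing left to apply)
```

Because a second run applies nothing, the migration step is safe to run on every deployment. Reverting an already-applied migration would still need a separate down-migration or a restore strategy.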

What are the best practices for ensuring security and compliance within a continuous delivery pipeline when deploying applications and infrastructure to cloud environments?

Security and compliance are paramount in a continuous delivery pipeline. Implement security scanning tools (e.g., static analysis, dynamic analysis, vulnerability scanning) to identify vulnerabilities early in the development process. Automate security testing as part of the pipeline. Use infrastructure-as-code to enforce security best practices and compliance policies. Implement access control and authentication mechanisms throughout the pipeline. Store secrets securely using a secrets management solution (e.g., HashiCorp Vault, AWS Secrets Manager, Azure Key Vault, Google Cloud Secret Manager). Regularly audit your pipeline and infrastructure for compliance with relevant regulations (e.g., GDPR, HIPAA, PCI DSS). Implement automated compliance checks to ensure ongoing adherence to policies. Integrate security into every stage of the cloud pipeline to shift security left.
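Whichever secrets manager you use, the application should receive secrets at runtime rather than containing them. The sketch below assumes the pipeline or platform injects a secret as an environment variable (the name `DATABASE_PASSWORD` is hypothetical) and fails fast at startup if it is missing, instead of falling back to a hard-coded default.

```python
import os
from typing import Mapping, Optional


class MissingSecretError(RuntimeError):
    """Raised when a required secret was not provided at runtime."""


def require_secret(name: str, environ: Optional[Mapping[str, str]] = None) -> str:
    """Read a secret injected at runtime and fail fast if it is absent or empty."""
    source = os.environ if environ is None else environ
    value = source.get(name)
    if not value:
        # Report only the name; never log or echo the secret value itself.
        raise MissingSecretError(f"required secret {name!r} is not set")
    return value


if __name__ == "__main__":
    try:
        password = require_secret("DATABASE_PASSWORD")  # hypothetical variable name
        print("database password loaded")
    except MissingSecretError as exc:
        raise SystemExit(str(exc))
```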